Neurons send visual information to the brain, but exactly how that information gets processed is a complex question that researchers are getting closer to answering.

By Elisabeth Moore

Does seeing something new have a bigger effect on you than seeing something you have seen many times before? We tend to notice parts of our environment that we have not yet experienced. We also tend to tune out things that are familiar. You may notice that you perceive your surroundings more vividly on a vacation to a new place than in an area near where you live. This effect also occurs in the neurons responsible for visual perception in your brain. Visual neuroscientists have long known that new, or novel, images increase neural signaling in the brain. But does this happen because there is less activity in visual parts of the brain when something is familiar? Or is it more complicated than that?

Of Neurons and Images

When we see an image in our visual field, our brain’s visual system is activated. Neurons begin firing in patterns that represent the light coming into the retina. However, humans do not see an exact replica of the world like a camera would. Instead, the brain builds visual images piece by piece. While it is building the image, the brain adjusts how we see those pieces based on what may be more or less important, previous experience, and many other factors. This is visual perception, where what we perceive is not the exact equivalent of the world around us. You may notice something the person next to you does not, even though you are both right in front of it.

Visual perception begins with neurons signaling in response to contrasts between light and dark, which mark the edges in an image. Neurons in the very early stages of our visual system, in a part of the thalamus called the lateral geniculate nucleus (LGN), begin this process. These edges are then pieced together into orientations by neurons in a brain area called the primary visual cortex (V1). These neurons in turn signal in patterns that form curvatures and shapes, which activate neurons in area V4. Eventually, these shapes form the objects that we put into meaningful categories, activating a brain area called inferior temporal cortex (IT). IT neurons signal in response to a variety of objects, faces, and scenes. And familiarity influences how the neurons in IT respond to such images.

So, not all images are treated equally by the brain. A novel image creates more signaling in IT neurons than the same image does once it becomes familiar. Originally, this was thought to happen through a mechanism called repetition suppression. The repetition suppression hypothesis holds that IT is less active if an object is familiar, and more active if it is novel. Repeated viewing of an image was thought to lead to an overall decrease in neural signaling. However, some researchers questioned whether this hypothesis is the whole story. Familiarity is not the only thing that makes IT neurons less active. Factors like low contrast or low brightness can also decrease signaling. If a decrease in activity were the only way that neurons signal familiarity, the brain would have a hard time knowing that a low-contrast, but novel, image is not familiar. Yet we can tell whether an image is familiar or not across many viewing conditions. In a recent study, Nicole Rust and colleagues hypothesized that there must be a more complicated mechanism behind how IT determines what is novel or familiar.
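The ambiguity described above can be made concrete with a toy calculation. This is only an illustrative sketch, not the study's model: the function names, the 100 spikes-per-second scale, the 0.7 suppression factor, and the fixed threshold are all made-up assumptions chosen to show how a decoder that reads only total firing can confuse low contrast with familiarity.

```python
# Toy numbers for illustration only; none of these values come from the study.

def total_response(contrast, familiar, suppression=0.7):
    """Hypothetical overall IT firing rate (spikes/s) for one image."""
    base = 100.0 * contrast  # assumption: firing scales with contrast
    return base * suppression if familiar else base  # familiarity lowers firing


def naive_decoder(rate, threshold=85.0):
    """Calls an image familiar whenever total firing falls below a fixed threshold."""
    return rate < threshold


high_contrast_novel = total_response(1.0, familiar=False)
high_contrast_familiar = total_response(1.0, familiar=True)
low_contrast_novel = total_response(0.6, familiar=False)

print(naive_decoder(high_contrast_novel))     # False: correctly called novel
print(naive_decoder(high_contrast_familiar))  # True: correctly called familiar
print(naive_decoder(low_contrast_novel))      # True: a novel image mistaken for familiar
```

In this sketch the low-contrast novel image drives even less firing than the high-contrast familiar one, so no single threshold on total firing can separate the two cases.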

Neurons in Action

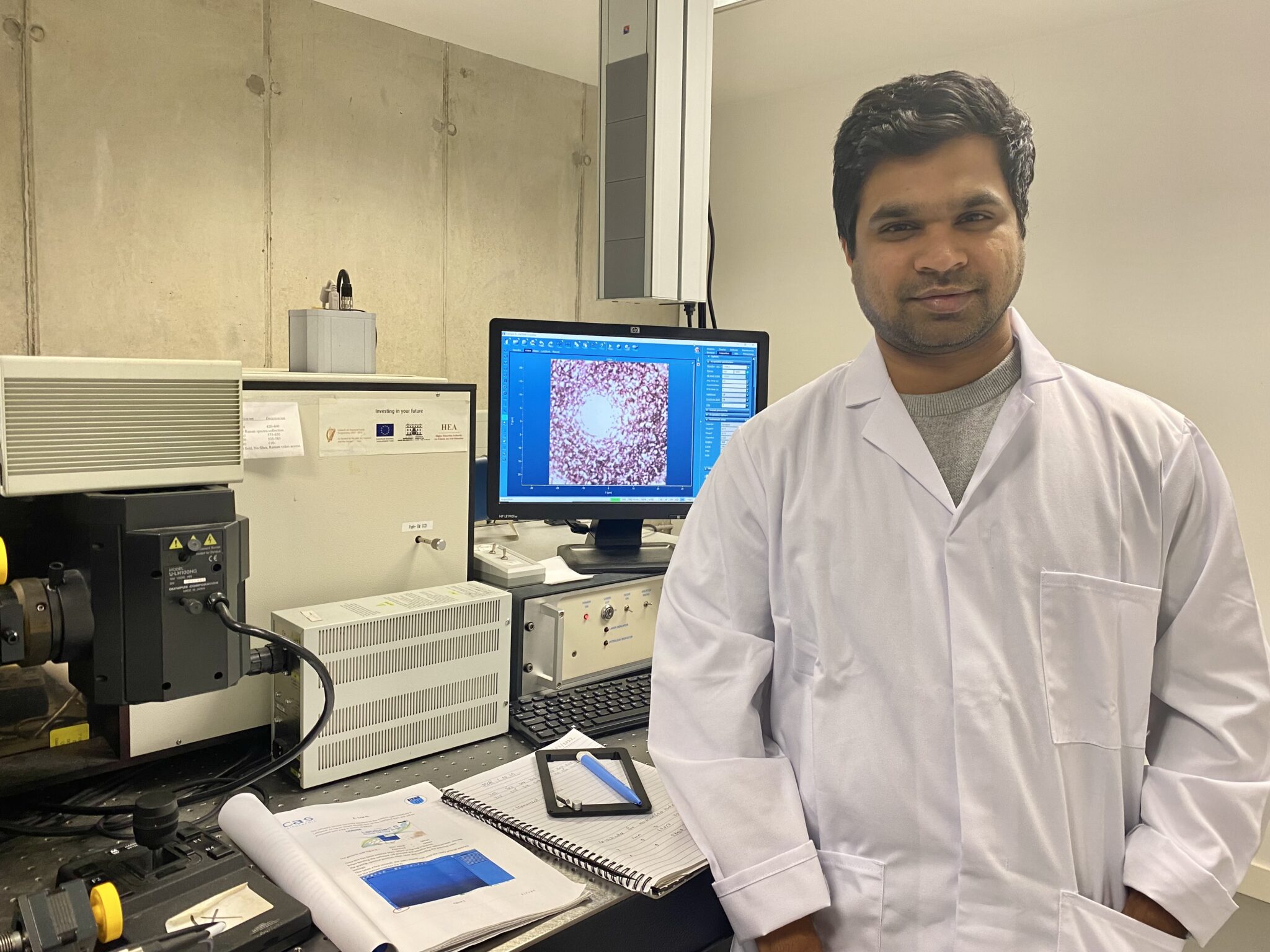

To study this, the researchers designed an experiment in which monkeys were trained to report whether they perceived images as familiar or not. Each image was presented twice, so that it was novel the first time and familiar the second. The monkeys were trained to move their eyes, or not, based on whether the image was familiar. The contrast of the image could also vary, but the monkeys were trained to ignore this change. The images were grayscale, so there was no change in color. While the monkeys were completing this task, the researchers recorded electrical signals from several hundred individual neurons in IT. The overall scientific question was: how often did each neuron send a signal when the image was familiar, versus novel? And how does this relate to simple changes to the image, such as contrast differences?

Individual neurons send out an electrical signal, or fire, in an all-or-nothing way when they are activated. These signals are also called spikes. An increased rate of firing means there is more activity, and a decreased rate of firing indicates less activity. The researchers found that familiarity and contrast both changed the overall firing rate in the neurons studied. Because a drop in firing could come from either one, this supported the hypothesis that there must be another mechanism by which the brain determines familiarity. Using mathematical calculations, the researchers found that the monkeys’ perception of familiarity was explained when contrast was corrected for with a more complex calculation than simple increases in firing. They propose that the brain does not simply increase firing when something is novel and decrease it when something is familiar. The brain instead expects a certain level of incoming signaling from a given image, including its contrast level. The brain region IT then subtracts out the signal of familiarity, if there is any, to create the visual perception. In this way, the brain can determine whether an image is novel or familiar. This new theory, called sensory referenced suppression, explains how the brain can tell if an image is familiar, even if low-level features, such as contrast in varying lighting settings, change.
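One way to picture the idea of comparing firing against an expected level is the toy sketch below. It is a simplified illustration and not the calculation used in the study: the function names, the gain of 100, the 0.7 suppression factor, and the 0.85 criterion are hypothetical, and the decoder is simply handed the image contrast, whereas the researchers derived their correction mathematically from the recorded neurons.

```python
# Illustrative sketch only; all names and numbers here are assumptions.

def population_rate(contrast, familiar, gain=100.0, suppression=0.7):
    """Hypothetical overall firing rate (spikes/s): scales with contrast, reduced when familiar."""
    rate = gain * contrast
    return rate * suppression if familiar else rate


def referenced_decoder(rate, contrast, gain=100.0, criterion=0.85):
    """Compares observed firing with the firing expected for a novel image at this contrast."""
    expected_if_novel = gain * contrast  # the sensory reference
    return rate / expected_if_novel < criterion  # True means "familiar"


for contrast in (1.0, 0.6):
    for familiar in (False, True):
        rate = population_rate(contrast, familiar)
        decoded = referenced_decoder(rate, contrast)
        print(f"contrast={contrast}, familiar={familiar}, decoded familiar={decoded}")
```

Under these assumptions, the decoded label matches the true label at both contrast levels, because the decoder judges firing relative to what that contrast should produce rather than against one fixed cutoff.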

Understanding how the brain signals visual objects, based on whether they are familiar or not, has several applications. Knowing the visual system’s code is particularly important in creating artificial intelligence involving vision. Humans can make perceptual decisions because we can ignore some stimuli and focus on others, including those that are new and may need to be learned or explored. We would be overwhelmed, and our decisions would be inefficient, if we always perceived everything in the world around us. Artificial intelligence is vastly improved when it can be developed beyond simple recreations of an image. Matching human perception more closely allows artificial intelligence to make more accurate, human-like decisions. Understanding perception and memory is also key to treating problems of memory. In Alzheimer’s disease, for example, a patient may incorrectly think their surroundings are unfamiliar. Understanding how the brain codes for familiarity can help in developing treatments for diseases or disorders where this ability may be impaired. Ultimately, if we want to be able to treat disorders of human perception, or replicate the brain’s visual system with technology, we first need to understand the underlying mechanisms in healthy brains.

This study was published in the peer-reviewed journal Proceedings of the National Academy of Sciences of the United States of America (PNAS).

Reference

Mehrpour, V., Meyer, T., Simoncelli, E. P., & Rust, N. C. (2021). Pinpointing the neural signatures of single-exposure visual recognition memory. Proceedings of the National Academy of Sciences, 118(18), e2021660118. https://doi.org/10.1073/pnas.2021660118

About the Author

Elisabeth Moore lives in Providence, RI with her cat Callie. She completed a PhD in neuroscience at the University of Minnesota in 2019, studying how the brain perceives visual stimuli and learns to see over time. She now manages the creation of plain language summaries for clinical trials and other patient-centered communications of scientific and technical information. Her overall goal is to become a full-time medical and health communications writer. Outside of work and writing, she enjoys camping, hiking, and riding her motorcycle through New England. Connect with Elisabeth on Twitter or Facebook.